What I’ve Learned:

“Zombie computer: beware the night of the living Dells.”

Zombies are kind of a big deal these days. If you’re a fan of TV or movies or video games, you’ve surely seen them — and like actual zombies, they’re still multiplying. It’s like somebody ran a zombie through a Dr. Seuss-ifier:

You’ve got fast ones and slow ones and now one with an ‘i’.

They crave brain, feel no pain and just want you to die.

There are zombies that walk and zombies that talk and zombies that grin like Fairuza Balk.

Some zombies dance and others fight plants and by now, one of them might be Jack Palance.

(Sorry. Too soon?)

The point is, zombies are everywhere in fiction — but they’re also everywhere in real life, in an insidious form you don’t often hear about. I’m talking about zombie computers, and there are millions upon millions of them just waiting to eat your… well, not brains, exactly. But probably your bandwidth. And these days, that’s just as bad.

A zombie computer (or just zombie, if you like) is a device that’s been taken over by a malicious user or bit of software, and now unquestioningly does the bidding of its nefarious master. Once the machine is hacked into or infected with a virus or Trojan horse or computer worm, it can become a zombie without anyone around it ever knowing.

(Unlike zombie humans, zombie computers apparently don’t decompose, start to smell or shuffle down the street mumbling, “CPUUuuuuus, CPUUuuuUUUUSSss…” So they’re harder to identify.)

And while Dr. Frankenstein used his “zombie” to terrorize the townspeople, and a voodoo priest might use a zombie army to, I don’t know, make a really big batch of jambalaya, maybe, controllers of zombie computers usually have much, much more sinister stuff in mind.

Like spam.

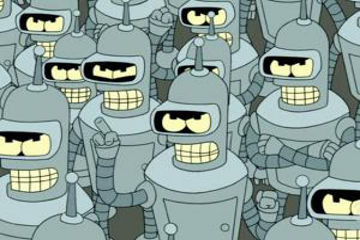

The puppet master of a bunch of zombie computers can coordinate them into something called a “botnet”, which is just a big gaggle of infected computers doing whatever they’re told. And some people tell them to send billions upon billions of junk emails to people all over the world.

Security experts estimate that roughly two-thirds of all email sent is “spam” of some kind, and much of that (up to eighty percent, according to one study) comes from zombie computers in botnets. It’s thought that a ten-thousand-computer botnet can send up to fifty billion emails in a single week. And that’s not a particularly large one; botnets have been seen with over one million zombie computers.

That’s “billion”, with a “b”. Kinda makes those zombie hordes on TV look like a couple of kindergarten kids, eh?
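For a sense of what that means per machine, here’s a quick back-of-the-envelope sketch in Python. It uses only the two figures quoted above (ten thousand zombies, fifty billion emails a week); the names are made up for illustration, and it assumes every zombie pulls its weight equally around the clock, which real botnets almost certainly don’t.

```python
# Back-of-the-envelope math using the figures quoted in the text.
# Assumption: the load is spread evenly across every zombie, 24/7.
BOTNET_SIZE = 10_000                  # zombie computers in the botnet
EMAILS_PER_WEEK = 50_000_000_000      # upper estimate: fifty billion
SECONDS_PER_WEEK = 7 * 24 * 60 * 60   # 604,800 seconds


def per_zombie_rates(botnet_size, emails_per_week):
    """Return (emails per week, per day, per second) for a single zombie."""
    per_week = emails_per_week / botnet_size
    per_day = per_week / 7
    per_second = per_week / SECONDS_PER_WEEK
    return per_week, per_day, per_second


per_week, per_day, per_second = per_zombie_rates(BOTNET_SIZE, EMAILS_PER_WEEK)
print(f"{per_week:,.0f} emails per zombie per week")
print(f"{per_day:,.0f} emails per zombie per day")
print(f"{per_second:.2f} emails per zombie per second")
```

By that rough math, each zombie would be churning out about five million emails a week, or a little over eight every second. Nobody’s typing that fast, undead or otherwise.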

Of course, zombie computer masters can do worse than flood a few (billion) inboxes. Botnets can also be used to artificially generate hits on websites; to generate so many simultaneous hits that sites effectively shut down (known as a DDoS, or distributed denial of service attack, very nasty); to commit identity theft, bank fraud, extortion and espionage; and, of course, to recruit more victims. What good would a zombie computer be, if it didn’t reach out and bite a few uninfected innocents?

So enjoy the science fiction shows and films and games featuring “scary” zombies that can’t actually crawl out of the grave and get you. But be wary of that laptop or PC that you’re watching or playing on. That could be a real zombie, sitting in your very own living room. Maybe even on your lap.

EEEEEEEKKK!!